GPUs keep getting faster, and data sets keep growing larger and richer. Deep learning workloads consume massive amounts of data, so they need storage that can keep up. Striking the right balance of storage performance, ease of management, and cost is a must.

Below are some storage system attributes that are essential to support these workloads:

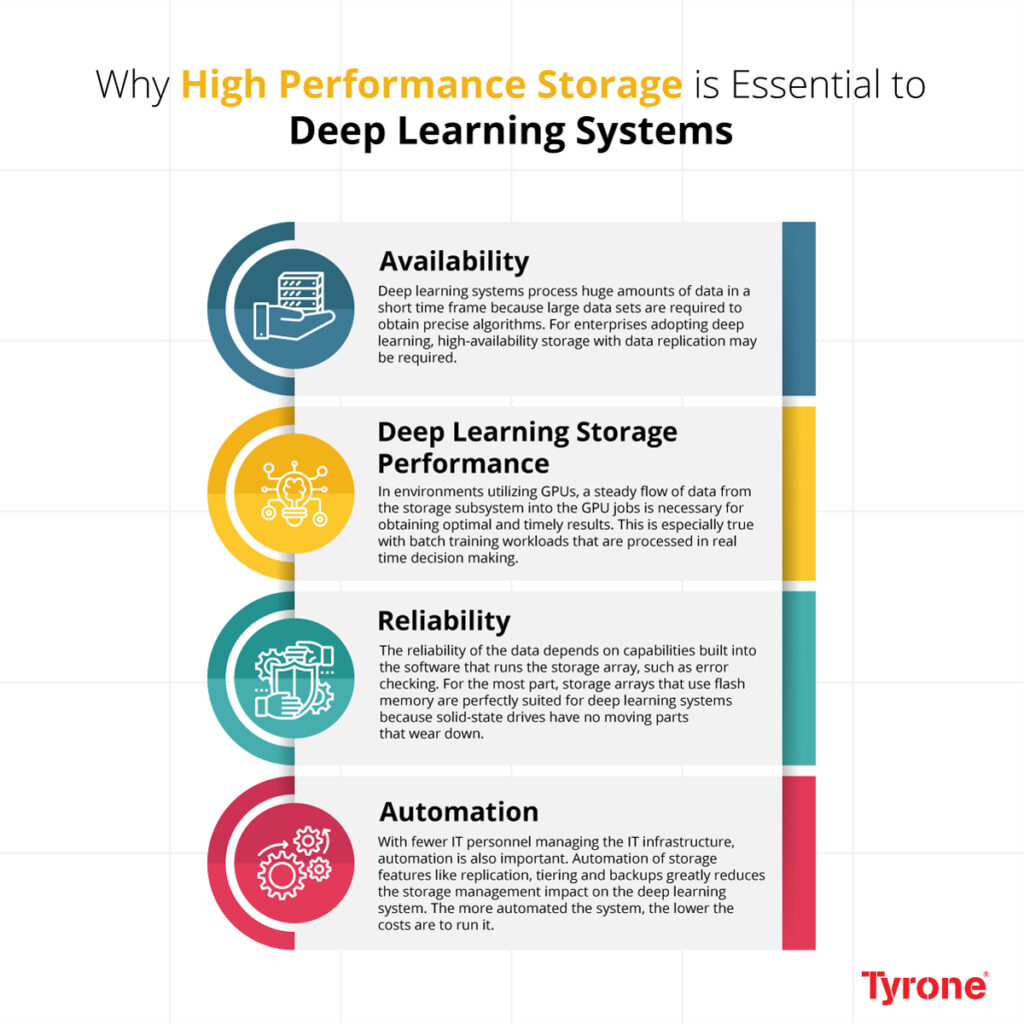

Availability

Deep learning systems process huge amounts of data in a short time frame because accurate models require large training data sets. If storage is not available, the deep learning software cannot process its workloads. Enterprises adopting deep learning may therefore need high-availability storage with data replication.

Deep Learning Storage Performance

Deep learning workloads draw on a broad array of data sources, which present different I/O load characteristics depending on the model, parameters, and variables. Limiting potential bottlenecks when reading data from storage is essential to maximizing throughput.
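One rough way to spot a storage bottleneck is to time a plain sequential read of a training file. The sketch below is only an illustration: the function name `read_throughput_mb_s` and the 1 MiB block size are assumptions made for this example, not part of any particular product or framework.

```python
import time


def read_throughput_mb_s(path, block_size=1024 * 1024):
    """Read a file sequentially and return the observed throughput in MB/s.

    Hypothetical helper for illustration; the block size is an assumption.
    """
    total_bytes = 0
    start = time.perf_counter()
    with open(path, "rb") as f:
        while True:
            chunk = f.read(block_size)
            if not chunk:
                break
            total_bytes += len(chunk)
    elapsed = time.perf_counter() - start
    # Guard against a near-zero elapsed time on very small files.
    return (total_bytes / 1e6) / max(elapsed, 1e-9)
```

Repeated reads of the same file are often served from the operating system's cache, so a number measured this way can overstate what the storage itself delivers. Real workloads also mix random and parallel reads, which this sketch does not cover.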

Reliability

If the data used is corrupt, deep learning systems cannot function. The reliability of the data depends on capabilities built into the software that runs the storage array, such as error checking. It also depends on how dependably the storage hardware itself operates.
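Error checking of this kind can also be done at the application level by comparing a file's checksum against a value recorded when the data was known to be good. This is a minimal sketch using Python's standard `hashlib`; the helper names `sha256_of_file` and `is_intact` are hypothetical, and storage arrays typically implement their own integrity checks internally.

```python
import hashlib


def sha256_of_file(path, block_size=1024 * 1024):
    """Return the SHA-256 hex digest of a file, read in fixed-size blocks."""
    digest = hashlib.sha256()
    with open(path, "rb") as f:
        # iter() with a sentinel stops when read() returns empty bytes.
        for chunk in iter(lambda: f.read(block_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def is_intact(path, expected_hex):
    """Return True if the file's current digest matches the recorded one."""
    return sha256_of_file(path) == expected_hex
```

A data loader could call `is_intact` before training starts and skip or re-fetch any file whose digest no longer matches.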

Automation

Automation of storage features like replication, tiering, and backups greatly reduces the storage management impact on the deep learning system. The more automated the system, the lower the cost of running it.

Talk to Tyrone about how we can support your journey with products and solutions engineered to accelerate transformation and business growth.

Explore AI for Business: https://bit.ly/2yDtUFC